Specialization

Responsibility, Free Will, Ethics of AI, Applied Ethics (esp. Environmental Ethics)

Competencies

Metaphysics, Epistemology, Classical Islamic Philosophy, Political Philosophy

Employment

2024-Present

Harvard University, Department of Philosophy

Postdoctoral Fellow in Philosophy

2023-2024

Chapman University, Smith Institute for Political Economy and Philosophy

Postdoctoral Researcher

Education

2016-2023

Syracuse University

PhD Candidate & Teaching Associate

Dissertation: Responsibility Internalism and Responsibility for AI

Committee: Sara Bernstein, Ben Bradley (primary), Mark Heller, Hille Paakkunainen

2015-2016

SUNY Fredonia

Postbaccalaureate in Philosophy

2004-2009

Firat University

BS in Computer Science Teaching

Publications

Forthcoming

'Against the Degree-Scope Response to Moral Luck, or A Farewell to Responsibility for Consequences'

Forthcoming

'Take a Stand, You Don't Have to Make a Difference'

2025

'AI Responsibility Gap: Not New, Inevitable, Unproblematic'

2024

'Drawing a Line: Rejecting Resultant Moral Luck Alone'

2022

'Moral Responsibility is Not Proportionate to Causal Responsibility'

2022

'Against Resultant Moral Luck'

2022

'Causation Comes in Degrees'

Public Philosophy

2020

‘Epistemic Injustice’

Talks

Speaker (*=refereed, +=invited)

'Responsibility Doesn't Require Alternative Possibilities'

- +SPAWN Returning Home: Ethics at Syracuse, Syracuse University (July 2025)

- *American Philosophical Association, Central Division (Feb 2025)

- Faculty Research Presentations, Harvard University (Oct 2024)

- Responsibility Workshop, Chapman University (Apr 2024)

‘AI Responsibility Gap: Not New, Inevitable, Unproblematic’

- AI and Data Ethics Workshop, Northeastern University (July 2024)

- *Midwest Ethics Symposium: Ethics and AI, The Prindle Institute for Ethics (Apr 2024)

- *Penn-Georgetown Digital Ethics Workshop, University of Pennsylvania (March 2024)

- Brown Bag Workshop, Chapman University (March 2024)

‘Current Debates in Ethics of AI and Technology’

- +Department of Computer Engineering, Kütahya Health Sciences University (Dec 2024)

‘Take a Stand, You Don’t Have to Make a Difference’

- +SOPhiA 2023: Collective Harm and Responsibility in the Climate Crisis, University of Salzburg (Sep 2023)

- *Young Philosophers Read-Ahead Conference, DePauw University (Jan 2023)

- *International Society for Environmental Ethics, American Philosophical Association, Eastern Division (Jan 2023)

- *Young Philosophers Lecture Series, DePauw University (Sep 2022)

‘Drawing A Line, Rejecting Resultant Moral Luck Alone’

- *American Philosophical Association, Pacific Division (Apr 2023)

- *Free Will, Moral Responsibility, and Agency, Florida State University (Feb 2023)

- ABD Workshop Series, Syracuse University (Oct 2022)

‘Wrong but Praiseworthy, Right but Blameworthy’

- *Rightness, Ignorance, Uncertainty, and Praise Workshop, University of Southern California (June 2022)

- *72nd Annual Meeting of the New Mexico Texas Philosophical Society, Baylor University (Apr 2022)

- ABD Workshop Series, Syracuse University (Feb 2022)

‘Causation Comes in Degrees’

- *American Philosophical Association, Eastern Division (Jan 2022)

- *Society for the Metaphysics of Science, 6th Annual Conference (Sep 2021)

‘Against Resultant Moral Luck’

- *Summer School on Causation and Responsibility, University of Bern (July 2021)

- *Great Lakes Philosophy Conference—Ethics in Action, Siena Heights University (Apr 2021)

- +Philosophical Society of Fredonia, SUNY Fredonia (Nov 2020)

- *94th Joint Session of the Aristotelian Society and the Mind Association, University of Kent (July 2020)

- *International Conference on Ethics, University of Porto (June 2019)

‘Moral Responsibility Is Not Proportionate to Causal Responsibility’

- *American Philosophical Association, Eastern Division (Jan 2021)

- ABD Workshop Series, Syracuse University (Feb 2020)

- *AGENT, Ethics and Normativity Talks, University of Texas at Austin (Nov 2019)

- *20th Annual Pitt-CMU Graduate Student Philosophy Conference, University of Pittsburgh & Carnegie Mellon University (March 2019)

‘Stocker’s Schizophrenia, Alienation, and a Solution’

- *Fundamentality in Philosophy, The 7th International Philosophy Graduate Conference, Central European University (Apr 2018)

‘Against Reliabilism: In the Face of Skepticism’

- *Northwest Student Philosophy Conference, Western Washington University (May 2017)

Commentator

Mar 2025

On Selim Berker’s ‘How Your Vote Determines a Winner: On the Metaphysics of Voting’

Edmond & Lily Safra Center for Ethics, Harvard University

July 2024

On Kendra Chilson’s ‘Keeping Our Hands Clean? Autonomous Systems and Diversion of Responsibility’

AI and Data Ethics Workshop, Northeastern University

Mar 2023

On Itamar Weinshtock Saadon’s ‘Responsibility, Causation, and Reversing the Order of Explanation’

Syracuse Graduate Philosophy Conference

Feb 2023

On Joshua Tignor’s ‘Theorizing About Moral Responsibility As Such’

ABD Workshop Series 2021, Syracuse University

July 2022

On Jules Salomone-Sehr’s ‘Complicity: A Minimalist Account for Our Maximally Messy Social World’

Vancouver Summer Philosophy Conference

Apr 2022

On Peter Zuk’s ‘Reconciling Experiential Theories of Pleasure’

72nd Annual Meeting of the New Mexico Texas Philosophical Society, Baylor University

Apr 2022

On Hannah Winckler-Olick’s ‘Simone de Beauvoir on Value-Creation as a Mode of Complicity’

Centennial Conference of the Creighton Club

Jan 2022

On David Sackris and Rasmus Rosenberg Larsen’s ‘Are There Moral Judgements?’

APA Eastern Division Meeting 2022

Oct 2021

On Joshua Tignor’s ‘Moral Growth and Moral Responsibility’

ABD Workshop Series 2021, Syracuse University

July 2021

On Alex Kaiserman’s ‘Responsibility and the ‘Pie Fallacy’’

Summer School on Causation and Responsibility, University of Bern

Apr 2021

On Perry Hendricks’s ‘The Impairment Argument Reconsidered’

Syracuse Graduate Philosophy Conference

Mar 2019

On Caner Turan’s ‘On Greene’s Evolutionary Challenge to Deontological Ethics’

Syracuse Graduate Philosophy Conference

Works in Progress

A paper on moral rightness and wrongness versus moral praise and blame

Under Review

A paper about the flicker defense against Frankfurt-style cases

Under Review

'Responsibility Doesn't Require Alternative Possibilities'

Polished Draft

'(How) Does Accountability Require Explainable AI?'

Draft

Teaching

Harvard University (Modules Embedded into Undergrad/Grad Computer Science Courses)

Spring 2025

Ethics—Deep Integration (Co-created)

CS50: Introduction to Computer Science

Spring 2025

Ethics Bowl

CS1060: Software Engineering with Generative AI

Spring 2025

Distributive Justice

CS1360: Economics and Computation

Fall 2024

Ethics of Technological Unemployment

ES159/259: Introduction to Robotics

Fall 2024

Ethical Implications of Interpretability (Co-created & Co-run)

CS2822R: Topics in Machine Learning - Interpretability

Fall 2024

Ethics of Hacking Back

CS2630: Systems Security

Chapman University (Lead Instructor)

Spring 2024

PHIL303: Environmental Ethics

Syracuse University (Lead Instructor)

Spring 2022/23

PHI394: Environmental Ethics

Fall 2023

PHI191: The Meaning of Life

Spring 2020, Summer 2021/22/23

PHI251: Logic

Spring 2021

PHI383: Free Will

Winter 2021

PHI200: Happiness and Meaning in Life

Fall 2020

PHI197: Human Nature

Summer 2020

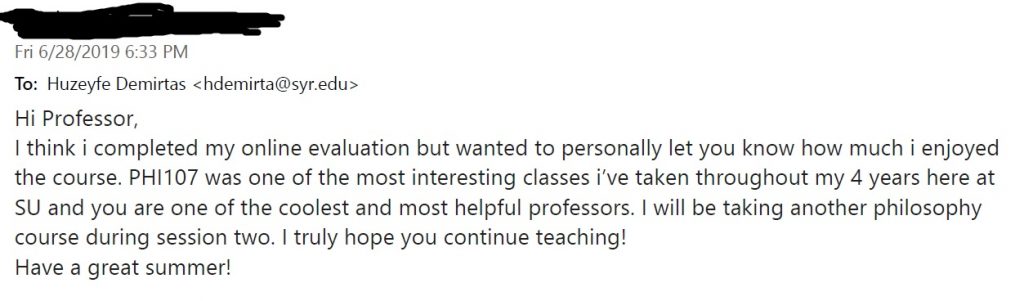

PHI107: Theories of Knowledge and Reality

Fall 2019

PHI192: Introduction to Moral Theory

Syracuse University (Teaching Assistant)

Fall 2021

Human Nature (Christopher Noble)

Spring 2019

Theories of Knowledge and Reality (Janice Dowell)

Fall 2018

Logic (Mark Heller)

Fall 2017

Introduction to Moral Theory (David Sobel)

Fall 2017

Introduction to Moral Theory (Hille Paakkunainen)

Spring 2017

Human Nature (Neelam Sethi)

Fall 2016

Theories of Knowledge and Reality (Robert Van Gulick)

Honors & Awards

2022

Summer Research Fellowship

Syracuse University

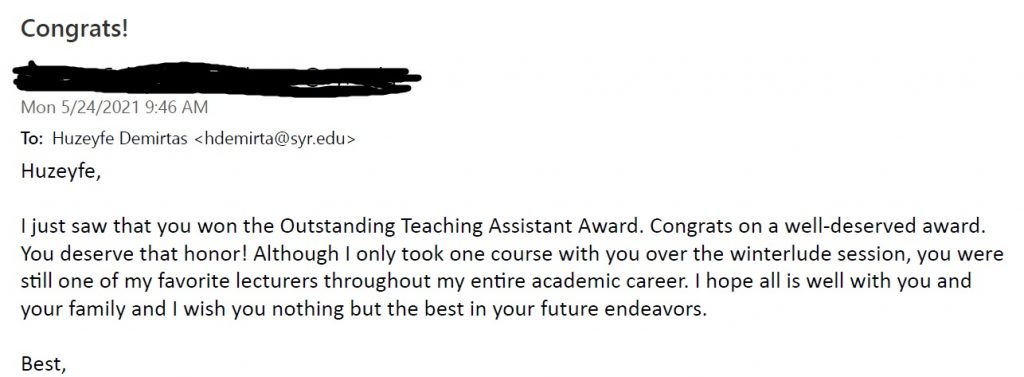

2021

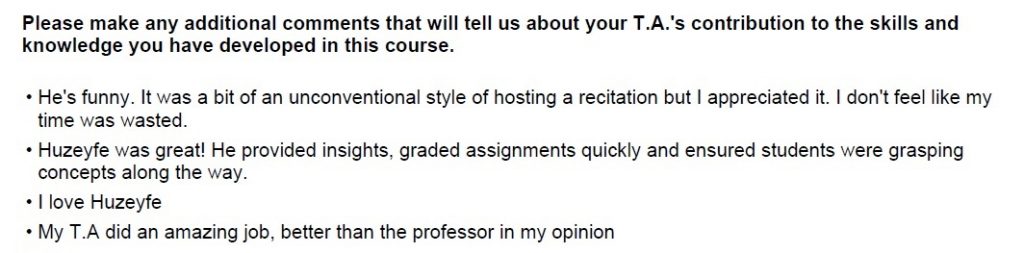

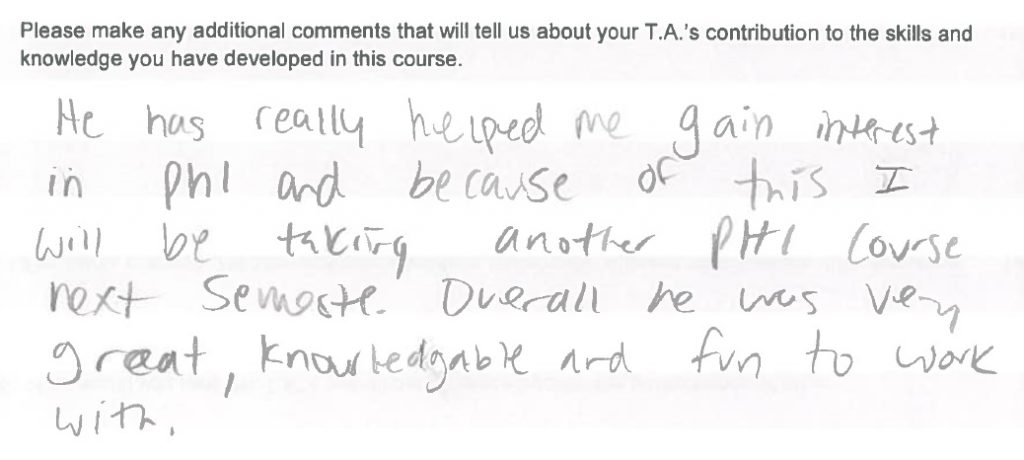

Outstanding Teaching Assistant Award

Syracuse University

2016

The Philosophical Society, Student Achievement Award

SUNY Fredonia

Service

Referee

American Philosophical Quarterly, Australasian Journal of Philosophy, Ergo, Erkenntnis, Ethics and Information Technology, European Journal of Philosophy, Journal of Philosophy, Journal of the American Philosophical Association, Synthese

Thesis Advising

Harvard University

Diana L. Yue's senior thesis, "Thinking Outside the Black Box: Justifying Beliefs in the Age of Opaque Autonomous AI Systems." (Spring '25)

Thesis Committee

Harvard University

Peter A. H. Jin's senior thesis, "Forgiveness, Atonement, and The Edge of Desert: Responding Morally to What We Don’t Deserve." (Spring '25)

Lead

Harvard, Embedded EthiCS Research and Engagement (Fall 2025)

Co-Lead

Harvard, Embedded EthiCS Teaching and Learning (Spring 2025)

Co-Lead

Harvard, Embedded EthiCS Research and Engagement (Fall 2024)

Co-Organizer

Responsibility Workshop, Chapman University (April 2024)

Judge

Southern California High School Ethics Bowl Competition (2024)

Senator

Syracuse Graduate Student Organization (2020-2021)

Co-Organizer

Syracuse Graduate Philosophy Conference (July 2020)

Graduate Coursework

Ethics (*=audit)

Moral and Political Philosophy (Hille Paakkunainen)

Constructivism in Metaethics (Hille Paakkunainen)

Anti-Realism and Pragmatism in Ethics (Nate Sharadin)

Anti-Theory in Ethics (Independent study with Hille Paakkunainen)

Ethics of Nudging (Independent study with Ben Bradley)

*Motivation (Hille Paakkunainen)

*Animal Ethics (Ben Bradley)

*Free Will (Mark Heller)

*Prudence (Ben Bradley)

Epistemology (*=audit)

Topics in Contemporary Epistemology (Nate Sharadin)

Language, Epistemology, Mind, Metaphysics (K. McDaniel, K. Edwards)

*Epistemology (Hille Paakkunainen)

Metaphysics

Beyond the Modal: Essence and Potentiality (Kris McDaniel)

Metaphysics of Ethics (Ben Bradley, Kris McDaniel)

Political Philosophy

Justice and Equality (Ken Baynes)

Philosophy of Social Sciences (Ken Baynes)

History of Philosophy

History of Philosophy (Frederick C. Beiser)

Classical Arabic Philosophy (Kara Richardson)

Logic and Language

Logic and Language (Michael Rieppel)

Concepts (Kevan Edwards)